🌐 Internet's Next Phase: Web 4.0 👯‍♀️ Still feeling AIS5 Buzz 💵 Bay Area Startups Collectively Secured $6.4B in May

🌐 Internet's Next Phase: Web 4.0

Last week's AIS5 recap covered five signals that came out of our summit. Today’s issue is more of a deep dive, unpacking what "The Agentic Internet" actually means for the infrastructure stack and why it surfaced as a theme throughout the day.

Jeremiah Owyang of Blitzscaling Ventures opened our lineup of speakers at AI INFRA Summit 5 by naming what he sees as the current phase: Web 4.0, the Agentic Internet. Autonomous AI agents are now deciding, transacting, learning, and replicating with minimal human input. The companies building ahead of this shift are producing 10X revenue per employee relative to traditional competitors.

Jeremiah Owyang, GP at Blitzscaling Ventures

That number got attention. But the part that stuck with the infrastructure crowd was what comes after the slide deck. If agents are making decisions, calling APIs, moving data, and triggering workflows on their own, the compute pattern underneath looks nothing like what most systems were designed for.

Traditional AI workloads follow a request-response pattern. A user sends a prompt, the model returns a result. Agentic workloads don't work that way. An agent receives a goal, breaks it into steps, calls tools, reads data, makes decisions, and sometimes spins up follow-on tasks without waiting for a human to approve each one. That changes the demand profile in a few important ways:

Compute becomes persistent. Agents don't spin up and shut down per query. They run continuously, maintaining state across long workflows. That turns burst demand into baseline load.

Token consumption compounds. Agentic workflows consume dramatically more tokens per task than single-turn interactions. Reasoning steps, tool calls, and multi-step chains all add up. This connects directly to Signal 3 from the AIS5 recap - inference economics are shifting because of how agents use the stack.

Security moves into the execution layer. When an agent can read files, make network calls, and access credentials on its own, the question of what it's allowed to do becomes an infrastructure problem. Sandboxing, policy enforcement, and audit trails have to be built into the runtime, not bolted on afterward.
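One way to picture policy enforcement and audit trails living in the runtime is a thin wrapper that every tool call must pass through. The sketch below is illustrative only: `Policy`, `Runtime`, `call_tool`, the tool name `read_file`, and the paths are hypothetical names chosen for this example, not anything presented at AIS5. The prefix check is deliberately naive; a real runtime would also need to normalize paths and sandbox the process.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Policy:
    """Hypothetical allow-list mapping a tool name to permitted target prefixes."""
    allowed: dict[str, tuple[str, ...]]

    def permits(self, tool: str, target: str) -> bool:
        # Unknown tools get an empty tuple, which never matches.
        prefixes = self.allowed.get(tool, ())
        # Naive prefix match; str.startswith accepts a tuple of candidates.
        return target.startswith(prefixes)


@dataclass
class Runtime:
    """Hypothetical runtime that checks policy and logs before running a tool."""
    policy: Policy
    audit_log: list[str] = field(default_factory=list)

    def call_tool(self, agent_id: str, tool: str, target: str,
                  action: Callable[[str], str]) -> str:
        allowed = self.policy.permits(tool, target)
        # Record the attempt whether or not it is allowed.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "agent": agent_id,
            "tool": tool,
            "target": target,
            "allowed": allowed,
        }))
        if not allowed:
            raise PermissionError(f"{agent_id} may not call {tool} on {target}")
        return action(target)
```

With a policy such as `Policy({"read_file": ("/data/reports/",)})`, a call targeting `/data/reports/q1.txt` runs, while one targeting `/etc/passwd` raises `PermissionError`. Both attempts land in `audit_log`, which is the point: the check and the record happen in the execution path rather than in a separate tool added later.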

Several AIS5 talks and sessions reinforced the same point from different angles. Nicola Tan from AMD laid out the case for rethinking enterprise infrastructure specifically around agentic AI. Luke Norris from Kamiwaza argued for AI orchestration as a core requirement of the agentic enterprise. The Token Economy panel, with representatives from Tencent Cloud and VAST, dug into what it actually costs when agents run continuously and consume tokens at scale. And in the breakout room, Michael Roy from AMD presented a session on why agents don't care what GPU they run on and what that means for teams designing heterogeneous compute environments.

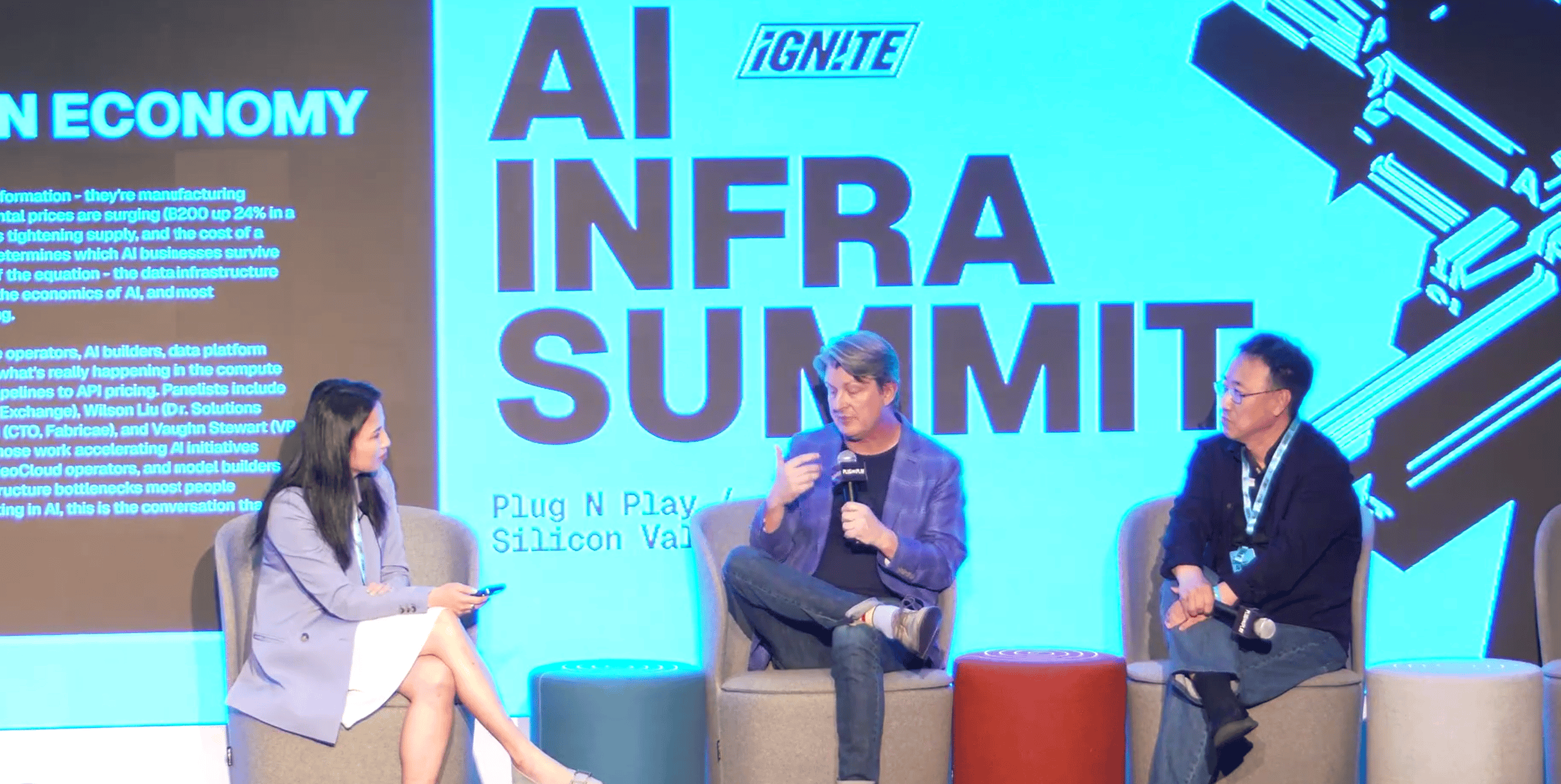

Carmen Li, Vaughn Stewart and Wilson Liu

The common thread across all of them: the infrastructure assumptions that work for single-turn AI break down when the workload is autonomous, persistent, and compounding.

Planning for agentic workloads means planning for compute that runs continuously, consumes tokens at a compounding rate, and requires governance built into the infrastructure itself. The organizations moving on this now are treating agents as persistent processes, not occasional tools.
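For capacity planning, the compounding can be put into rough numbers. The sketch below assumes the simplest case, where each agent step re-sends the full accumulated context and nothing is cached. The `Step` type, the function names, and the token counts are made up for illustration and are not figures from the summit.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One agent step; the token counts are illustrative assumptions."""
    new_input_tokens: int  # tool output or instructions added to the context
    output_tokens: int     # reasoning and tool-call tokens the model generates


def single_turn_tokens(prompt_tokens: int, output_tokens: int) -> int:
    """Tokens processed by one request-response exchange."""
    return prompt_tokens + output_tokens


def agent_task_tokens(goal_tokens: int, steps: list[Step]) -> int:
    """Total tokens processed when every step re-reads the accumulated context."""
    context = goal_tokens
    total = 0
    for step in steps:
        context += step.new_input_tokens
        # The model reads the whole context, then writes its output.
        total += context + step.output_tokens
        # That output becomes part of the context for the next step.
        context += step.output_tokens
    return total
```

Under these assumed numbers, a five-step task with a 200-token goal, 300 tokens of new input per step, and 150 tokens of output per step processes 7,750 tokens, versus 350 for a single exchange with 200 tokens in and 150 out. Prompt caching or context trimming would lower the agent figure, so treat this as an upper-bound style estimate rather than a measurement.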

Every organization sits somewhere on the AI culture spectrum. The ones building for agentic workflows are pulling ahead. The ones still treating AI as a feature are watching the gap widen.

The infrastructure underneath has to keep up.

That's what AI INFRA SUMMIT exists to support.

See you at AIS6 on December 4th in San Francisco.

Secure your spot with Super Early Bird Tickets below

AI INFRA 5 // STILL FEELING THE BUZZ

// UPCOMING

Bay Area Startups Collectively Secured $6.4B in May Week 1

The first week of the month closed with $6.4B in funding. Seven megadeals accounted for 90% of the total, the largest being $4B to The Deployment Company (fka DeployCo), the joint venture between OpenAI and several PE firms to sell AI tools to their portfolio companies. Anthropic has also announced a similar arrangement, and Google is reportedly in talks with other PE firms as well.

The Cerebras IPO will be the first in the AI arena in 2026, ahead of the SpaceX, Anthropic and OpenAI triad. And demand is high - the company plans to raise its IPO price range from the initial $115-$125 per share to $125-$135 after drawing orders for more than 20X the number of shares available.

For startups raising capital: Stay on top of who's raising, who's closing and who's investing with the Pulse of the Valley weekday newsletter. Click through to get more detail on investors and executives, including email addresses for both. Founders get the newsletter, database and alerts for just $7/month ($50 value). Check it out and sign up here.

Follow us on LinkedIn to stay on top of SV funding intelligence, and the companies, investors and executives impacting the startup ecosystem.

Early Stage:

RadixArk, an AI infrastructure company building open, scalable systems for training, deploying, and running frontier models, closed a $100M Seed.

Standard Intelligence, an aligned AGI lab building a general agent through full video pre-training on computer use, closed a $75M Series A.

Tessera Labs, a multi-agent AI platform that transforms how enterprises modernize complex ERP systems and data, closed a $60M Series A.

Nace.AI, an applied AI company building a specialized intelligence powered by Metamodel designed to run companies, closed a $21.5M Seed.

Altara, which is building the scientific intelligence platform that helps frontier industries accelerate R&D through manufacturing, closed a $7.6M Seed.

Growth Stage:

Sierra Technologies, which helps businesses build better, more human customer experiences with AI, closed a $950M Series E.

Astranis, which builds advanced satellites for high orbits providing dedicated, secure networks, closed a $450M Series E.

Corgi Insurance, an AI-native, full-stack insurance carrier built for startups, closed a $160M Series B.

Deep Infra, a purpose-built cloud inference platform for high-throughput AI, closed a $107M Series B.

Anello Photonics, which develops integrated photonic system-on-chip technology, closed a $25M Series B.

vCluster is a Kubernetes infrastructure platform focused on virtual clusters, multi-tenancy, and GPU infrastructure orchestration for AI clouds and enterprise AI factories. The company helps operators deliver hyperscaler-style infrastructure experiences without multiplying physical clusters.

What they deliver

- Virtual Kubernetes clusters with isolated control planes
- Multi-tenant GPU infrastructure management
- Self-service environments for AI and ML teams
- Kubernetes orchestration across public and private cloud
- Infrastructure automation for AI cloud providers and enterprise AI factories

CEO Lukas Gentele (left) moderating a panel at AIS5

Why it matters

AI infrastructure teams are running into the limits of traditional Kubernetes operations. Dedicated clusters for every team create overhead, waste GPU capacity, and slow deployment cycles. vCluster approaches the problem differently by virtualizing Kubernetes environments on shared infrastructure while preserving isolation, performance, and governance. The result is higher GPU utilization, faster provisioning, and infrastructure that scales more like a hyperscaler than a traditional enterprise environment.

Who they serve

AI cloud providers, platform engineering teams, enterprises operating internal AI factories, and infrastructure operators managing GPU-heavy workloads at scale.

vCluster joined us at AI Infra Summit 5, where conversations around Kubernetes orchestration, tenant isolation, GPU scheduling, and hyperscaler-grade infrastructure were front and center. As AI infrastructure moves from experimentation into industrial-scale deployment, the operational problems vCluster is focused on are quickly becoming core bottlenecks across the industry.

Explore vCluster to see how they are helping teams scale AI infrastructure more efficiently.

Your Feedback Matters!

Your feedback is crucial in helping us refine our content and maintain the newsletter's value for you and your fellow readers. We welcome your suggestions on how we can improve our offering. [email protected]

Logan Lemery

Head of Content // Team Ignite
