✍️ AI Workloads Are Being Rewritten 💰 Bay Area Startups Collectively Secured $193B

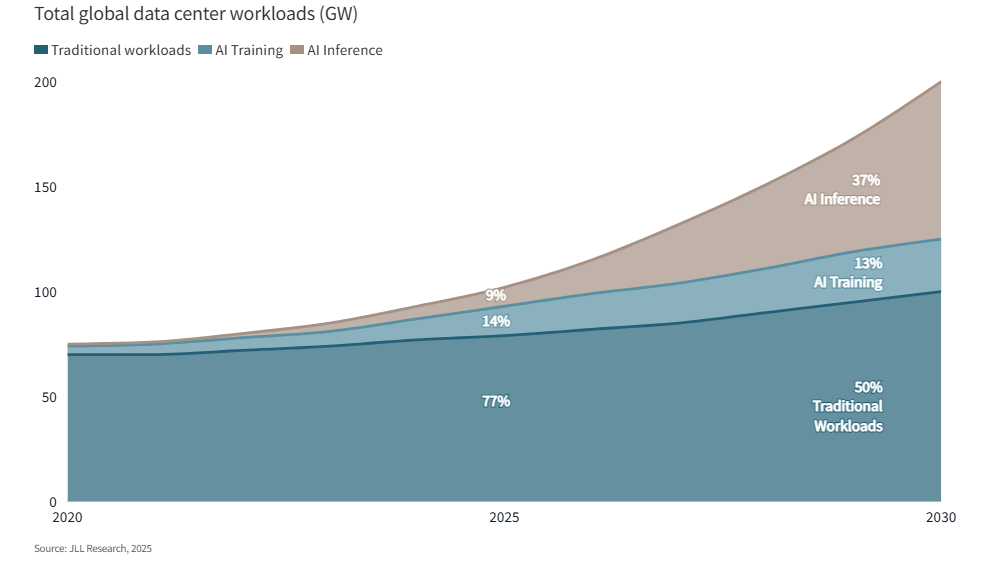

For the past several years, AI infrastructure planning has revolved around training. The largest models demanded enormous clusters, enormous power draw, and enormous capital outlays, and the industry treated those training runs as the central event around which the rest of the ecosystem revolved. That emphasis is now shifting as the economics of AI move from model creation toward model usage.

By the middle of this decade, the majority of compute demand is expected to come from inference rather than training. Estimates across the industry point to a crossover point around 2027, when the cumulative workload of answering queries, generating content, and powering software agents exceeds the compute devoted to creating new frontier models. The infrastructure priorities that follow from that shift look very different from those that defined the early model race.

Training rewards raw scale. Large clusters maximize floating-point throughput, and the objective is to compress months of compute into weeks. Inference rewards efficiency and responsiveness instead. Each user interaction triggers a sequence of token generation events that must occur quickly and at extremely low cost, since those tokens accumulate across millions or billions of requests. The question operators increasingly ask is not how many FLOPS a system can deliver, but how much it costs to produce each token delivered to an end user.
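To make the cost-per-token question concrete, here is a minimal sketch of the arithmetic. The function name and every number in it (hourly cost, throughput, utilization) are hypothetical placeholders, not figures reported in this newsletter.

```python
def cost_per_million_tokens(hourly_cost_usd: float,
                            tokens_per_second: float,
                            utilization: float = 1.0) -> float:
    """Cost in USD to serve one million tokens from a single system.

    hourly_cost_usd: all-in hourly cost (energy, depreciation, hosting).
    tokens_per_second: sustained decode throughput while busy.
    utilization: fraction of each hour the system spends serving traffic.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical inputs: $2.00/hour, 2,500 tokens/s, busy 60% of the time.
example_cost = cost_per_million_tokens(2.00, 2500, utilization=0.6)
print(f"${example_cost:.2f} per million tokens")  # about $0.37
```

Under these assumptions, doubling throughput or utilization halves the cost per token, which is why operators watch those two numbers more closely than peak FLOPS.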

That economic pressure exposes a major inefficiency in the current deployment model. Many production services rely on frontier-scale models with hundreds of billions of parameters, which are expensive to operate when queried repeatedly at scale. Running those models for everyday inference can quickly produce negative margins once energy, hardware depreciation, and infrastructure costs are accounted for. In response, companies are shifting toward distillation and quantization strategies that compress capabilities into far smaller architectures.

The emerging consensus places many production deployments in the range of seven to eight billion parameters, where models retain strong performance while dramatically reducing memory footprint and compute requirements. Teams at large platforms have reported that these smaller models often occupy a practical “sweet spot,” delivering usable intelligence without the operating cost profile associated with frontier-scale networks.
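A rough way to see why smaller, quantized models are cheaper to host is to estimate the memory needed just to hold the weights. The sketch below counts weights only and ignores the KV cache, activations, and runtime overhead. The function name and the 400-billion-parameter comparison point are illustrative assumptions.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    if num_params <= 0 or bits_per_weight <= 0:
        raise ValueError("num_params and bits_per_weight must be positive")
    return num_params * bits_per_weight / 8 / 1e9


# A 7-billion-parameter model at 16-bit and at 4-bit precision,
# next to a hypothetical 400-billion-parameter model at 16-bit.
print(f"{weight_memory_gb(7e9, 16):.1f} GB")    # 14.0 GB
print(f"{weight_memory_gb(7e9, 4):.1f} GB")     # 3.5 GB
print(f"{weight_memory_gb(400e9, 16):.1f} GB")  # 800.0 GB
```

By this estimate the smaller model fits on a single accelerator with room left for cached context, while the larger one has to be split across many devices.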

This change in workload also exposes a different architectural constraint. Inference performance depends less on pure compute throughput and more on memory bandwidth and latency, since generating tokens requires repeated access to model weights and cached context. As a result, the economics of inference increasingly favor systems designed around fast memory access and efficient caching rather than simply adding more accelerators.
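The memory-bandwidth constraint can be illustrated with a common back-of-the-envelope bound: if every generated token requires reading all model weights from memory once, single-stream decode speed cannot exceed bandwidth divided by weight size. This is a simplified sketch that ignores batching, KV cache traffic, and compute time, and the bandwidth figure is a hypothetical example rather than a spec for any particular accelerator.

```python
def decode_tokens_per_second_upper_bound(weight_bytes: float,
                                         memory_bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed when weight reads dominate.

    Assumes each generated token streams the full set of weights from
    memory exactly once (batch size 1, no KV cache traffic counted).
    """
    if weight_bytes <= 0 or memory_bandwidth_bytes_per_s <= 0:
        raise ValueError("inputs must be positive")
    return memory_bandwidth_bytes_per_s / weight_bytes


# Hypothetical device with 1 TB/s of memory bandwidth.
bandwidth = 1e12
fp16_bound = decode_tokens_per_second_upper_bound(14e9, bandwidth)   # 7B params, 16-bit
int4_bound = decode_tokens_per_second_upper_bound(3.5e9, bandwidth)  # 7B params, 4-bit
print(f"16-bit: up to ~{fp16_bound:.0f} tokens/s")  # roughly 71
print(f"4-bit:  up to ~{int4_bound:.0f} tokens/s")  # roughly 286
```

Under this simplification, shrinking the weights raises the ceiling on tokens per second without adding any compute, which is the sense in which memory access rather than FLOPS sets the pace.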

Some operators have begun describing this approach as a kind of token warehouse. Context is loaded and prepared once, and the system focuses on decoding tokens as efficiently as possible across many requests. The objective is to reduce repeated computation and spread the cost of preparation across a large number of responses.
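The "prepare once, decode many times" idea can be sketched as a small cache keyed by the shared context. This is a toy illustration, not how any particular serving stack implements it. `PreparedContextCache`, `amortized_cost`, and the stand-in `prepare` function are all hypothetical names.

```python
import hashlib
from typing import Callable, Dict


class PreparedContextCache:
    """Runs an expensive preparation step once per distinct context."""

    def __init__(self, prepare: Callable[[str], object]) -> None:
        self._prepare = prepare
        self._store: Dict[str, object] = {}
        self.prepare_calls = 0  # how many times preparation actually ran

    def get(self, context: str) -> object:
        key = hashlib.sha256(context.encode("utf-8")).hexdigest()
        if key not in self._store:
            self._store[key] = self._prepare(context)
            self.prepare_calls += 1
        return self._store[key]


def amortized_cost(prepare_cost: float, decode_cost: float, num_responses: int) -> float:
    """Per-response cost when one preparation is shared by num_responses responses."""
    if num_responses <= 0:
        raise ValueError("num_responses must be positive")
    return prepare_cost / num_responses + decode_cost


# Stand-in for real context preparation (for example, building cached state).
cache = PreparedContextCache(prepare=lambda text: text.split())
for _ in range(1000):
    cache.get("shared system prompt and reference documents")
print(cache.prepare_calls)                # 1
print(amortized_cost(10.0, 1.0, 1))       # 11.0
print(amortized_cost(10.0, 1.0, 1000))    # 1.01
```

With made-up cost units, a preparation step ten times as expensive as decoding almost disappears from the per-response cost once it is shared across a thousand responses.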

As inference demand grows, infrastructure strategy follows the economics of tokens rather than the spectacle of training runs. The next phase of AI deployment will be defined less by the largest models ever trained and more by the systems capable of delivering useful tokens quickly, reliably, and at a price that makes the business work.

GTC Week is almost here and San Jose is about to become the epicenter of the AI world.

Thousands of builders, founders, engineers, and investors will be in town, and the best conversations won’t happen on the expo floor — they’ll happen after hours.

That’s why we’re hosting a series of curated evening events during GTC week designed for high-signal networking with the people actually building the future of AI infrastructure.

⚡ Founders building the next generation of AI platforms

⚡ Infra leaders scaling trillion-parameter compute

⚡ Operators solving the real constraints of power, cooling, and clusters

⚡ Investors backing the AI infrastructure race

Whether you're a builder, operator, or investor — these gatherings are where the real conversations happen. Below are a few of the featured events happening this week. Spots are limited, so secure your place early.

Bay Area Startups Collectively Secured $193B in 2026 YTD

March has started out slow with just two megadeals (Ayar Labs at $500M and Science Corporation at $230M), in contrast to February's massive fundings. Of February's $163B total, 98.7% of the funds ($161B) went out via twenty-six megadeals. And of that $161B, just three companies received 95% of the investment: OpenAI with $116B, Anthropic with $30B, and Waymo with $16B. Two months into the year, 2026 YTD now stands at $193B, over 90% of the total funding raised in the full year of 2025.

For startups raising capital: Stay on top of who's raising, who's closing and who's investing with the Pulse of the Valley weekday newsletter. Founders get the newsletter, database and alerts for just $7/month ($50 value). Check it out and sign up here.

Follow Link Silicon Valley on LinkedIn to stay on top of what's happening in and around startup funding, venture capital and key players in the startup ecosystem.

Early Stage:

Cylake closed a $45M Seed, building a complete, AI-native, data-driven cybersecurity platform for the world’s largest and most regulated institutions.

Guild.ai closed a $44M Series A, provides all-in-one AI that helps builders do their AEC office work, from report writing to 2D to 3D renders.

JetStream Security closed a $34M Seed, an enterprise AI governance and AI security platform built to control, secure, and scale agentic AI in production.

DiligenceSquared closed a $5.4M Seed, enables leading investors and corporates to make critical investment decisions with confidence through AI-powered market research and commercial due diligence.

Vor Systems closed a $3M Pre-Seed, an AI-enabled transaction platform built for complex energy deals.

Growth Stage:

Ayar Labs closed a $500M Series E, transforming AI infrastructure with the industry’s first proven co-packaged optics (CPO) solution.

Science Corporation closed a $230M Series C, focused on solving some of neuroscience's hardest questions and most serious unmet medical needs.

Portside closed a $65M Private Equity round, a leading provider of advanced software solutions for the global aviation industry.

Polares Medical closed a $50M Series C, a structural heart company developing a novel transcatheter therapy designed to treat patients suffering from mitral regurgitation.

ArmorCode closed a $16M Series B, helps enterprises manage security risk and governance across today's heterogeneous technology environments.

Brandon Omoregie, Co-Founder of IgniteGTM, will be leading a new weekly segment in the newsletter focused on open roles across the AI Infrastructure landscape.

In addition to his work with Ignite, Brandon is the founder of The Offr Group, where he helps high growth technology companies hire exceptional talent and connect experienced operators with the right opportunities.

What Brandon Will Cover

Each week Brandon will share a curated set of open positions across companies in our ecosystem, including startups, infrastructure providers, and growth stage AI companies.

What Readers Can Expect

• High signal job openings across AI, infrastructure, and startups

• Roles sourced directly from founders and hiring managers

• A simple way for companies to share open positions with the community

Why This Matters

The AI infrastructure ecosystem is scaling quickly. New companies are launching, teams are expanding, and the demand for experienced operators continues to grow. This segment highlights where that growth is happening and where talent is needed most.

Want to Share an Open Role?

Companies in the ecosystem can submit roles to be featured in upcoming editions.

Connect with Brandon:

https://www.linkedin.com/in/brandonomoregie/

Your Feedback Matters!

Your feedback is crucial in helping us refine our content and maintain the newsletter's value for you and your fellow readers. We welcome your suggestions on how we can improve our offering. [email protected]

Logan Lemery

Head of Content // Team Ignite
